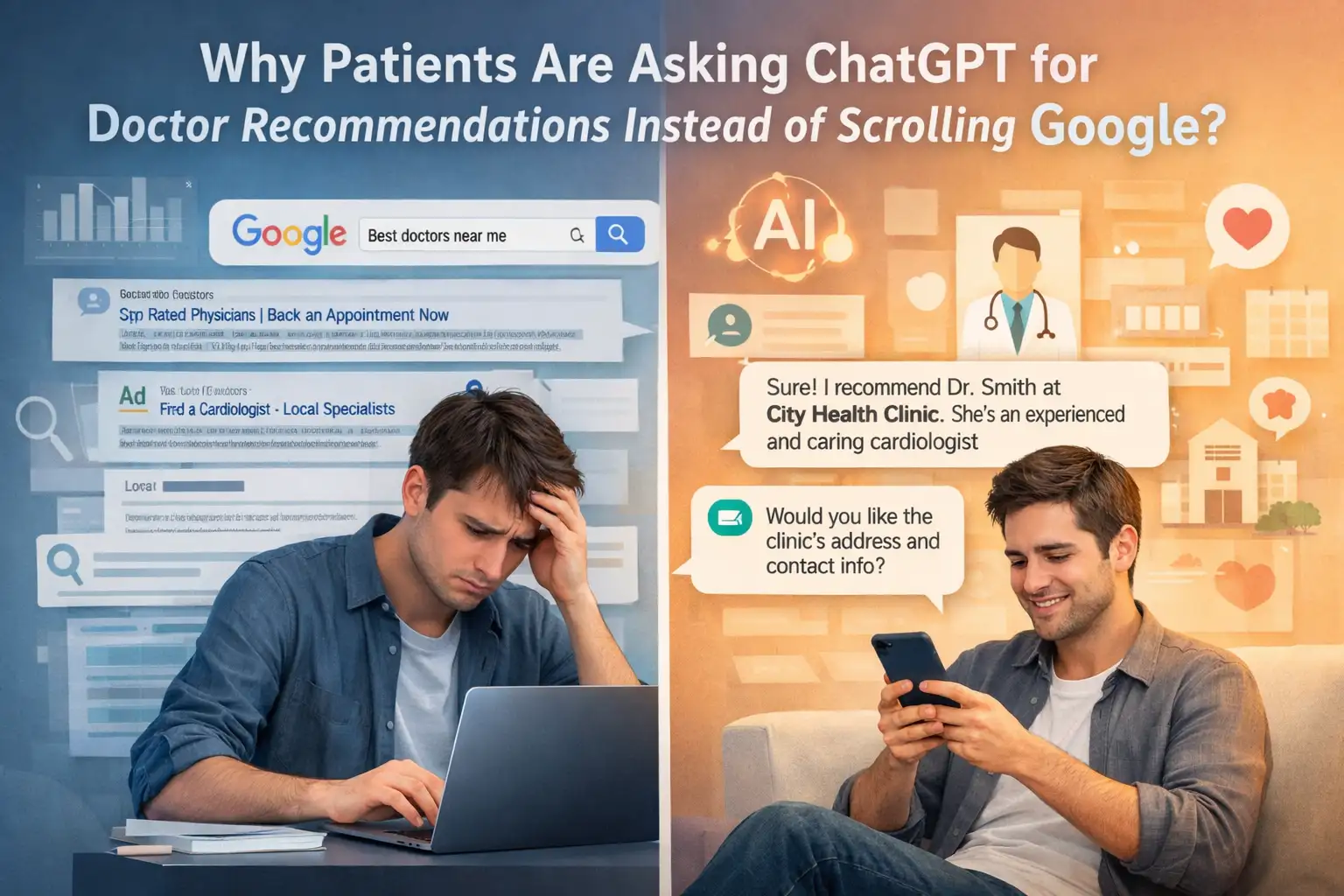

Patients are increasingly turning to ChatGPT for doctor recommendations, drawn by AI-driven answers that feel personalized, compassionate, and easy to digest. This trend, particularly among younger demographics, signals a critical need for healthcare providers to adapt their digital strategies. To remain visible in the evolving healthcare digital marketing landscape, clinics must prioritize AI patient discovery and Generative Engine Optimization (GEO) for doctors.

Key Takeaways

Patients are shifting from traditional Google searches to ChatGPT for doctor recommendations because they seek more empathetic and understandable information. This shift in healthcare consumer behavior requires clinics to prioritize Generative Engine Optimization for doctors and AI patient discovery strategies. Adapting to the AI vs Google for healthcare paradigm helps clinics stay visible and preserve physician trust in an evolving digital landscape.

The Shifting Tides of Patient Search Behavior 2026: Why AI is Winning Patient Trust

The way patients look for healthcare information is changing fundamentally and moving beyond Google. Patients, particularly Gen Z and Millennials, increasingly bypass traditional search engines and ask ChatGPT for doctor recommendations instead. This significant shift in healthcare consumer behavior reflects a deeper psychological demand: patients want information that feels personal, empathetic, and easy to digest. Studies from 2025-2026 indicate that AI-driven search now accounts for approximately 30% of total healthcare interactions, with younger generations spearheading the trend (Expert Insights). This change in patient search behavior in 2026 challenges conventional wisdom about online patient acquisition.

The core of this shift lies in what experts call the "Empathy Gap." Patients often perceive traditional medical search results as dense, jargon-filled, and overwhelming. Such content demands a degree of digital health literacy that patients often lack when they feel vulnerable. Google's algorithmic presentation of information, while comprehensive, feels impersonal and leads to detachment. AI responses, in contrast, are often more compassionate and easier to understand (Expert Insights). ChatGPT's conversational interface mimics natural dialogue and presents personalized health recommendations in a less intimidating, more reassuring context. For a patient with vague symptoms, a conversational AI helps them articulate concerns and navigate next steps. A static list of symptoms or conditions on a webpage cannot match this dynamic interaction. This perceived empathy and accessibility build a different kind of trust, which in turn affects how much patients trust physicians. Clinic owners and healthcare marketing executives must recognize that ranking high on Google for generic keywords no longer guarantees AI patient discovery. They must understand how patients interact with and derive value from patient-facing AI health tools so that their clinics remain discoverable and relevant. Ignoring this trend risks invisibility to a growing patient segment.

Navigating the Generative Engine Optimization for Doctors Landscape: Beyond Traditional SEO

As patients increasingly turn to conversational AI for doctor recommendations, traditional Search Engine Optimization (SEO), including local SEO for healthcare, is no longer sufficient on its own. Clinic owners and healthcare marketing executives must now grapple with Generative Engine Optimization (GEO) for doctors. This strategic evolution addresses how Large Language Models (LLMs) process, synthesize, and present medical information. Traditional SEO focuses on keywords, backlinks, and technical site health to rank on search engine results pages. GEO, however, centers on how generative AI perceives and references content. Its aim is for the clinic to become an authoritative source that AI models cite or draw upon in their responses. If a clinic's valuable information isn't optimized for AI ingestion, it risks being overlooked and failing to appear in the increasingly popular AI patient discovery pathways.

The cornerstone of effective GEO is "Source Authority Engineering" (Expert Insights). The concept holds that a healthcare entity must be mentioned in, referenced by, or a direct contributor to authoritative medical journals, reputable health organizations, and academic publications. Generative AI healthcare applications prioritize information from highly credible, peer-reviewed sources when providing recommendations. A clinic that publishes research on a new surgical technique in a respected medical journal, or whose physicians are frequently quoted in established health news outlets, will naturally carry more source authority in the AI's perception. Because this activity directly influences AI citation frequency, investing in thought leadership, contributing to medical discourse, and seeking peer recognition are no longer mere PR exercises; they are fundamental to digital visibility. Healthcare marketing strategists must guide providers to engage proactively with these avenues, transforming medical expertise into verifiable, AI-digestible authority. This might involve publishing case studies, participating in clinical trials whose findings are subsequently published, or having physicians contribute expert opinions to accredited health platforms. The goal is to build a digital footprint that LLMs recognize as a definitive source of accurate, reliable medical information, so that when a patient asks for a doctor recommendation, the AI confidently points towards well-established and highly regarded providers. This strategic shift is crucial for securing a clinic's presence within the emerging AI vs Google for healthcare paradigm.

Mitigating AI Hallucinations and Ensuring Patient Safety in AI Recommendations

The rise of ChatGPT doctor recommendations brings significant opportunities for AI patient discovery. It also presents critical challenges, particularly the risk of AI hallucinations and AI-generated medical misinformation. While AI models are adept at synthesizing vast amounts of data, they are not infallible. "Hallucinations," where AI generates plausible but entirely incorrect or fabricated information, pose a severe threat to patient safety (according to research published by healthmanagement.org). For a patient seeking medical advice or a doctor recommendation, an erroneous AI response could lead to delayed diagnosis, inappropriate self-treatment, or a referral to an unqualified provider. Such errors also severely erode patients' trust in physicians.

Clinic owners and healthcare marketing executives have a strategic imperative to address these risks head-on. They must focus on ethical AI in healthcare decisions and the safe deployment of patient-facing AI health tools. One crucial solution involves educating patients about AI's limitations. While AI can provide preliminary information and guide initial searches, it should always be presented as a supplementary tool, not a replacement for professional medical consultation. Clinics can develop clear disclaimers for any AI tools they integrate. These disclaimers should emphasize that all personalized health recommendations AI provides must be verified by a licensed healthcare professional. Furthermore, clinics must ensure their own digital content is meticulously accurate and regularly updated. This content acts as a reliable, verifiable data source that AI models can draw from. This includes structured data about physician credentials, specializations, affiliations, and patient outcomes.
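One common way to expose physician credentials and affiliations as structured data is schema.org markup serialized as JSON-LD. The sketch below is illustrative only: the helper name `physician_jsonld` and every value passed to it are placeholders, and the handful of properties shown (`medicalSpecialty`, `hospitalAffiliation`, and so on) is an assumed minimal subset of the schema.org `Physician` type, not a complete or required set.

```python
import json


def physician_jsonld(name, specialty_url, hospital, url, telephone):
    """Build a schema.org Physician record and return it as a JSON-LD string.

    Hypothetical helper for illustration; field selection is an assumption.
    """
    record = {
        "@context": "https://schema.org",
        "@type": "Physician",
        "name": name,
        # schema.org models specialties as enumeration members (URLs).
        "medicalSpecialty": specialty_url,
        "hospitalAffiliation": {"@type": "Hospital", "name": hospital},
        "url": url,
        "telephone": telephone,
    }
    return json.dumps(record, indent=2)


# Placeholder values; replace with verified clinic data.
markup = physician_jsonld(
    name="Dr. Jane Example",
    specialty_url="https://schema.org/Cardiovascular",
    hospital="Example General Hospital",
    url="https://clinic.example/dr-jane-example",
    telephone="+1-555-0100",
)
print(markup)
```

The resulting string would typically be embedded in the physician's profile page inside a `<script type="application/ld+json">` element, and should be regenerated whenever credentials or affiliations change.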

To counter algorithmic bias in healthcare AI, which can perpetuate existing healthcare disparities through skewed recommendations, clinics should advocate for transparency in AI model training and data sourcing. By understanding how AI models are trained, healthcare providers can push for inclusive and representative datasets. For example, if an AI is trained predominantly on data from a specific demographic, its recommendations for other groups might be less accurate or even harmful. Clinics can also implement internal review processes for any AI-generated content or recommendations their patients might encounter, ensuring human oversight remains paramount. This proactive approach not only safeguards patient well-being but also reinforces the indispensable value of human medical expertise, ensuring that LLMs in medicine serve as powerful assistants rather than unchallenged authorities in the delicate realm of patient care.

The Strategic Imperative: Adapting to the AI vs Google for Healthcare Paradigm

The escalating reliance on ChatGPT doctor recommendations signals more than just a technological upgrade. It marks a fundamental healthcare consumer behavior shift that necessitates a complete re-evaluation of digital strategy for clinics. The AI vs Google for healthcare paradigm is not a future projection but a present reality. A significant portion of healthcare interactions are already driven by AI (Expert Insights). For clinic owners, healthcare marketing executives, and medical SEO strategists, the urgency to adapt cannot be overstated. Failure to strategically pivot risks rendering a clinic "invisible" in the very channels where patients now seek their care. This means that a robust SEO presence alone, while still valuable, is no longer sufficient for AI patient discovery.

The solution involves developing a holistic strategy that seamlessly integrates AI-centric approaches with existing digital marketing efforts. This begins with an in-depth audit of all current digital assets to assess their "AI readiness." Are website structures, physician bios, and service descriptions easily digestible by Large Language Models (LLMs) in medicine? Is the information factual, concise, and verifiable? Beyond structure, the strategic imperative involves creating content specifically designed to feed AI models. This isn't about keyword stuffing; it's about establishing clear, authoritative statements that AI can confidently synthesize and present. For example, rather than simply listing services, clinics should publish definitive articles or guides on specific conditions, treatments, and their associated physicians, formatted in a way that AI can readily identify key facts, credentials, and outcomes.
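An "AI readiness" audit can start as a simple completeness check over each physician profile. The following is a minimal sketch under stated assumptions: the field names in `REQUIRED_FIELDS`, the `audit_profile` helper, and the sample profiles are all hypothetical, and a real audit would cover far more than missing fields (accuracy, freshness, page structure).

```python
# Assumed checklist of fields a profile should expose; adjust to the clinic's needs.
REQUIRED_FIELDS = ("name", "credentials", "specialties", "affiliations", "last_reviewed")


def audit_profile(profile):
    """Return the required fields that are missing or empty in a profile dict."""
    return [field for field in REQUIRED_FIELDS if not profile.get(field)]


# Invented sample data for illustration.
profiles = [
    {
        "name": "Dr. A",
        "credentials": "MD",
        "specialties": ["Dermatology"],
        "affiliations": ["Example Hospital"],
        "last_reviewed": "2026-01-15",
    },
    {"name": "Dr. B", "credentials": "", "specialties": []},
]

for profile in profiles:
    gaps = audit_profile(profile)
    status = "ready" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{profile['name']}: {status}")
```

Treating empty strings and empty lists as gaps is a deliberate choice here: a bio with a blank credentials field gives an AI model nothing to verify, so it is flagged the same way as an absent field.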

Furthermore, addressing potential algorithmic bias in healthcare AI is a critical component of this strategic adaptation. AI models, if left unchecked, can perpetuate biases present in their training data, leading to skewed or inequitable recommendations. Clinics must proactively ensure their online profiles and digital content are representative and inclusive, providing detailed information that accurately reflects their diverse patient care capabilities. This includes showcasing diverse physician profiles, accessibility information, and community outreach initiatives. Strategically, clinics should also explore partnerships with emerging patient-facing AI health tools and platforms, ensuring their verified data is directly integrated into these systems. This active engagement allows clinics to shape their narrative within the AI ecosystem. It mitigates the risk of misrepresentation or omission. By embracing this strategic imperative, healthcare providers can navigate the complexities of the AI vs Google for healthcare shift, ensuring sustained AI patient discovery and continued relevance in a rapidly evolving digital healthcare landscape.


Building Physician Trust in the AI Era through Proactive Engagement and Transparency

The burgeoning use of ChatGPT doctor recommendations fundamentally changes the traditional dynamics of physician trust. While AI offers incredible efficiencies and personalized insights, there is an inherent risk: over-reliance on patient-facing AI health tools could inadvertently diminish the perceived authority and necessity of human physicians. Patients might begin to prioritize AI-generated information over expert medical advice, or question the value of in-person consultations if they believe AI can provide sufficient answers. This creates a critical challenge for clinic owners and healthcare marketing executives: how to leverage LLMs in medicine while reinforcing the irreplaceable value of the human element in healthcare.

The solution lies in proactive engagement and radical transparency regarding the role and limitations of AI. Clinics must not only embrace AI for patient discovery but also clearly communicate how these tools complement, rather than replace, human expertise. This means establishing guidelines for the ethical use of AI in healthcare decisions within the practice and openly discussing them with patients. For instance, clinics could run patient education campaigns through in-office materials, website FAQs, and social media posts. These campaigns would explain that while AI can assist with information gathering or appointment scheduling, accurate diagnosis, empathetic care, and complex treatment plans unequivocally require the judgment and experience of a licensed medical professional. As a practical example, a clinic using an AI chatbot for initial symptom checks can ensure every interaction concludes with a strong recommendation to schedule a consultation with a doctor for a definitive diagnosis and treatment plan.
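The chatbot safeguard described above can be enforced in code rather than left to the model's discretion. This is a minimal sketch, assuming the clinic controls a post-processing step between the chatbot and the patient; `CONSULT_NOTICE`, its wording, and `with_consult_notice` are hypothetical names and text, not part of any specific chatbot product.

```python
# Assumed wording; a clinic would have this reviewed by clinical and legal staff.
CONSULT_NOTICE = (
    "This information is not a diagnosis. Please schedule a consultation "
    "with a licensed doctor for a definitive diagnosis and treatment plan."
)


def with_consult_notice(reply):
    """Return the reply with the consultation notice appended exactly once."""
    text = reply.rstrip()
    if text.endswith(CONSULT_NOTICE):
        return text  # Already present; avoid duplicating the notice.
    return f"{text}\n\n{CONSULT_NOTICE}"


print(with_consult_notice("Mild headaches can have many causes, including dehydration."))
```

Appending the notice in a wrapper means every reply carries it even when the underlying model omits it, and the `endswith` check keeps the function safe to apply more than once.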

By demystifying patient-facing AI health tools and openly addressing the risks of AI hallucinations, clinics can strengthen trust in their physicians. This involves training front-desk staff and medical professionals to articulate AI's function clearly. They should emphasize that AI processes data, but a doctor provides context, compassion, and a personalized care plan based on individual circumstances and medical history. Clinics can highlight instances where human intervention corrected an AI's oversight or provided a nuanced understanding that an algorithm couldn't replicate. Furthermore, countering AI-generated medical misinformation with authoritative, fact-checked content can position the clinic as a trusted source of truth. This dual approach, embracing AI's power while championing human expertise, ensures that ChatGPT doctor recommendations serve as a gateway to informed patient care, ultimately enhancing, not eroding, the vital bond of trust between patients and their physicians.


Pro-Tips for Optimizing for Generative AI in Healthcare

Become an AI-Cited Authority: Prioritize "Source Authority Engineering" by actively seeking mentions in authoritative medical journals and publications. This directly influences AI citation frequency (Expert Insights). For example, publish case studies or research findings.

Bridge the Empathy Gap Digitally: Create website content, patient FAQs, and social media posts that emulate the compassionate and easy-to-understand tone patients seek from AI. Simplify complex medical jargon to support patients' digital health literacy.

Structure Your Data for LLMs: Ensure all your clinic's online information (physician bios, service descriptions, patient testimonials) is structured and semantically clear. This allows LLMs to accurately parse and present your credentials and offerings for AI patient discovery.

Proactively Address AI Limitations: Educate patients about the risks of AI hallucinations and the need for verification by a medical professional. Transparency about patient safety in AI recommendations reinforces physician trust.

Monitor Patient Search Behavior in 2026: Regularly analyze how patients interact with AI-driven search to refine your Generative Engine Optimization for doctors strategies. Adapt your content creation to align with evolving shifts in healthcare consumer behavior.

Combat Algorithmic Bias: Review your digital presence to ensure it is inclusive and diverse. Address any potential algorithmic bias in healthcare AI by presenting comprehensive and equitable information about your services and staff.

FAQ

Q1: What is Generative Engine Optimization for doctors and how does it differ from traditional SEO?

A1: Generative Engine Optimization for doctors (GEO) focuses on making a clinic's information discoverable and trustworthy for AI models like ChatGPT. Unlike traditional SEO, which aims for search engine rankings, GEO emphasizes "Source Authority Engineering" (Expert Insights). This means getting mentioned in authoritative medical journals and publications so AI will cite your practice confidently, leading to AI patient discovery.

Q2: Why are patients choosing ChatGPT over Google for doctor recommendations?

A2: Patients are turning to ChatGPT for doctor recommendations because they want more empathetic and easily digestible information. Studies indicate patients find AI responses more compassionate and easier to understand than dense medical search results (Expert Insights). This shift in healthcare consumer behavior also stems from a desire for personalized health recommendations delivered in a conversational format.

Q3: What risks do AI hallucinations pose in patient-facing AI health tools?

A3: AI hallucinations occur when AI generates plausible but incorrect medical information, posing severe threats to patient safety (according to research published by healthmanagement.org). They can lead to medical misinformation, delayed diagnoses, or inappropriate care, and can significantly damage physician trust. Clinics must advocate for the ethical use of AI in healthcare decisions and for human oversight.

Q4: How significant is the shift in patient search behavior in 2026?

A4: As of early 2026, AI-driven search already accounts for approximately 30% of total healthcare interactions, with Gen Z and Millennials leading this adoption (Expert Insights). This shift indicates a fundamental change in how patients discover and vet healthcare providers, underscoring the urgency of adapting to the AI vs Google for healthcare paradigm.

Q5: How can clinics ensure they remain visible for AI patient discovery?

A5: To ensure visibility for AI patient discovery, clinics must implement Generative Engine Optimization for doctors. This involves enhancing "Source Authority Engineering" by contributing to medical journals (Expert Insights), structuring online content for AI comprehension, and proactively engaging with patient-facing AI health tools. Adapting to the AI vs Google for healthcare shift is crucial for sustained relevance.

Q6: What is algorithmic bias in healthcare AI and how can it be mitigated?

A6: Algorithmic bias in healthcare AI refers to prejudices embedded in AI models through their training data, which lead to skewed or inequitable recommendations. To mitigate it, clinics must ensure their digital presence is inclusive and representative. They should also advocate for transparent AI training data and audit their online content to avoid perpetuating biases, supporting the ethical use of AI in healthcare decisions.